Using the data loaded earlier, we can use eval to create dummy variables for the ‘Seller_Type’, ‘Transmission’ and ‘Fuel_Type’ columns.

| eval dummy_ = 1

| inputlookup car_data.csv | sample seed=2607 partitions=3 | fillnull value=0 | search partition_number=1 | fields Selling_Price | rename Selling_Price as Selling_Price_1 ]

The result of the SPL will show two columns, Selling_Price_0 and Selling_Price_1. We can now run the t-test by appending the SPL below to our search.

| score ttest_ind Selling_Price_1 against Selling_Price_0 alpha=0.1

I picked an alpha of 0.1. Our null hypothesis in this case is that the Selling Price of Partition 0 and the Selling Price of Partition 1 have the same mean. As with the one-sided t-test, the decision comes from comparing the p-value with alpha: the null hypothesis is rejected only when the p-value is below alpha, so given our extremely large p-value and an alpha of 0.1 we cannot reject the null hypothesis when we run this unpaired t-test. The Splunk output describes the decision on the null hypothesis in the ‘Test Decision’ column.

source="test_API_data.csv" host="test_API_data" index="main" sourcetype="csv" | timechart values(total_number_of_hits) as total_number_of_hits, values(successful_hits) as successful_hits, values(unsuccessful_hits) as unsuccessful_hits, values(status) as status span=1hr | fillnull total_number_of_hits, successful_hits, unsuccessful_hits

In the updated table, the “missing hours” now have rows of zeroes, which tells us that there was no activity during these hours rather than ambiguously leaving them out. However, the “status” column is still empty for these missing hours. The status is the state of the API endpoint at the end of each hour: if the API was available at the end of the hour, the status is reported as UP and, conversely, if the API was unavailable, the status is reported as DOWN. Since there were no hits during the missing hours, there is nothing to tell us whether our API endpoint was available or not.
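The effect of timechart followed by fillnull on missing hours can be tried without the API data. The sketch below is a run-anywhere search that uses makeresults to create three made-up events two hours apart. The event count, the spacing and the hit values are assumptions chosen for illustration only; they are not the data used in this blog.

```spl
| makeresults count=3
| streamstats count as n
| eval _time = relative_time(now(), "@h") - (n * 7200)
| eval total_number_of_hits = n * 10
| timechart span=1h values(total_number_of_hits) as total_number_of_hits
| fillnull total_number_of_hits
```

timechart creates a row for every hour in the range, including the hours that had no events, and fillnull then writes 0 (its default value) into those empty cells.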
Click here to download the car_dataset.csv file to follow this blog. I added the CSV file to my $SPLUNK_HOME/etc/apps/Splunk_MLTK/lookups/ directory. After onboarding the CSV file, use the ‘| inputlookup car_data.csv’ command to view the data. Now that we have loaded some data, let's go over the first useful command.

1 – Splunk: Creating dummy variables using Eval Command

It’s an eval function that can help create dummy variables for our categorical fields. It splits each categorical field into separate fields, one for each unique value found in that column.
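To make the dummy-variable idea concrete, here is a minimal sketch of one way to do it with eval. It assumes the lookup contains the ‘Seller_Type’, ‘Transmission’ and ‘Fuel_Type’ columns mentioned in this blog, and it relies on eval's curly-brace syntax, which uses the value of one field as part of the name of the new field. The field-name prefixes are my own naming choice, so treat this as an illustration rather than the exact SPL used in this blog.

```spl
| inputlookup car_data.csv
| eval Seller_Type_{Seller_Type} = 1
| eval Transmission_{Transmission} = 1
| eval Fuel_Type_{Fuel_Type} = 1
| fillnull value=0
```

Each row gets a 1 in the column named after its own category; for example, a row whose Transmission is Manual ends up with Transmission_Manual = 1. fillnull then writes 0 wherever a field is still empty. Note that fillnull value=0 without a field list fills every empty field in the results, not just the dummy columns.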

One of my favourite features in Splunk MLTK is the ‘Score’ command. It was released with MLTK version 4.0.0 and is packed with statistics such as AUC (Area Under the Curve) for validating classification models and ANOVA (Analysis of Variance) for linear regression models. This was a much-awaited upgrade in Splunk MLTK when it was released. It provides metrics that are commonly used in the data science world for model verification and performance.

Before we begin – Let’s onboard some data in Splunk

I downloaded this dataset from Kaggle. It is about predicting used-car prices based on several factors such as km_driven, fuel_type, seller_type, etc.

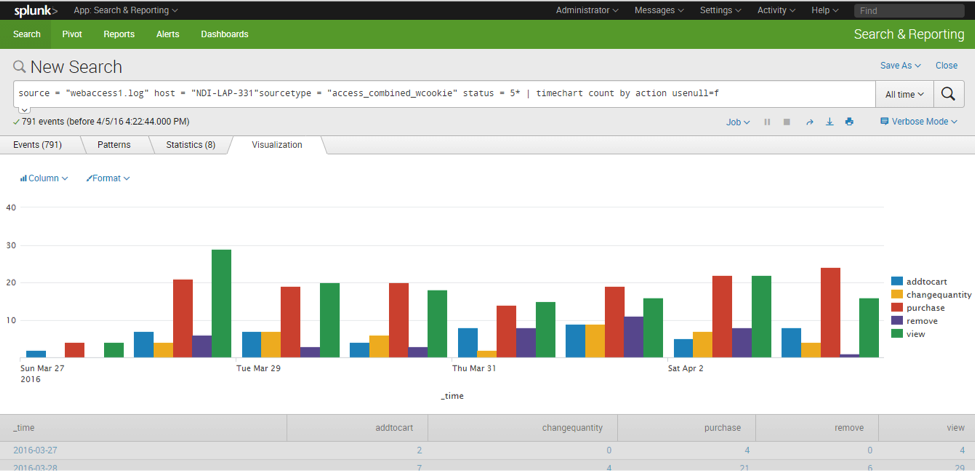

The Splunk Machine Learning Toolkit is packed with machine learning algorithms, new visualizations, a web assistant and much more. This blog sheds light on some lesser-known features and commands in the Splunk Machine Learning Toolkit (MLTK) and core Splunk Enterprise that will assist you in various steps of your model creation or development. With each new release of Splunk or the Splunk MLTK, a catalog of new commands becomes available. In this blog I attempt to highlight commands that have helped in some data science or analytical use-cases.
